Hubitat's button implementation differs from SmartThings' (ST's). In fact, we do not have a "Button" capability implemented in our apps at all.

We didn't like it, so we abandoned it altogether...

We have replaced "Button" with one mandatory capability, "PushableButton", and three optional add-ons: "HoldableButton", "DoubleTapableButton", and "ReleasableButton".

PushableButton:

/*

PushableButton (replaces button)

driver def:

capability "PushableButton"

commands:

push(<button number that was pushed>)

attributes:

NUMBER numberOfButtons //sendEvent(name:"numberOfButtons", value:<number of physical buttons on the device>)

NUMBER pushed //sendEvent(name:"pushed", value:<button number that was pushed>)

app:

device list input type:

capability.pushableButton

subscription:

subscribe(deviceList, "pushed", buttonHandler) //all buttons

subscribe(deviceList, "pushed.1", buttonHandler) //button one only

notes:

a numberOfButtons event should be sent when the driver's updated() method runs; this allows an app to determine the button count if desired.

*/

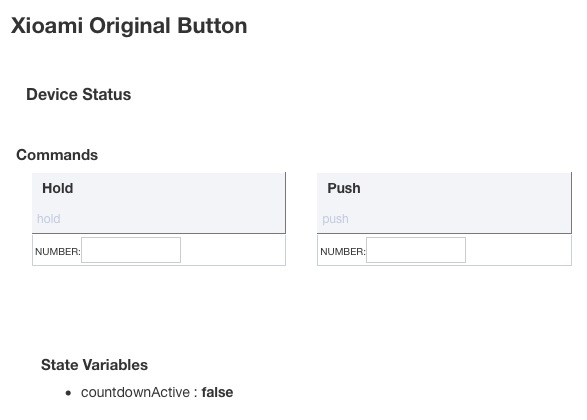
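A minimal driver sketch for a PushableButton device might look like the following. The driver name and namespace, the `buttonCount` preference, and its default of 4 are hypothetical. Passing `isStateChange: true` is an assumption based on common Hubitat driver practice: it makes pushing the same button twice in a row produce two events instead of one.

```groovy
metadata {
    // Hypothetical name/namespace; replace with your own
    definition(name: "Example Pushable Button", namespace: "example", author: "example") {
        capability "PushableButton"
    }
    preferences {
        // Hypothetical preference so the button count can be set per device
        input name: "buttonCount", type: "number", title: "Number of buttons", defaultValue: 4
    }
}

def installed() {
    updated()
}

def updated() {
    // Publish the button count so apps can read numberOfButtons
    sendEvent(name: "numberOfButtons", value: (buttonCount ?: 4) as Integer)
}

// Implements the push(<button number>) command that PushableButton defines
def push(button) {
    // isStateChange forces a new event even if the same button was pushed last time
    sendEvent(name: "pushed", value: button as Integer, isStateChange: true,
              descriptionText: "${device.displayName} button ${button} was pushed")
}
```

Sending numberOfButtons from `installed()` as well as `updated()` is a small extra step so the attribute exists before the user first saves the device page.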

HoldableButton:

/*

HoldableButton (used in addition to PushableButton if the device supports a hold function)

driver def:

capability "HoldableButton"

commands:

hold(<button number that was held>)

attributes:

NUMBER held //sendEvent(name:"held", value:<button number that was held>)

app:

device list input type:

capability.holdableButton

subscription:

subscribe(deviceList, "held", buttonHandler) //all buttons

subscribe(deviceList, "held.1", buttonHandler) //button one only

notes:

this capability is not intended to be used separately from PushableButton; all HoldableButton devices are expected to also include and implement PushableButton

*/
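On the app side, a device that implements both capabilities can be selected with a `capability.holdableButton` input and subscribed to for both attributes. The sketch below routes `pushed.1` and `held.1` to one handler and branches on the event name. The app name, the `buttons` input, and the logging are hypothetical.

```groovy
definition(
    name: "Example Button Watcher",   // hypothetical app name
    namespace: "example",
    author: "example",
    description: "Logs pushed and held events for button 1",
    category: "Convenience",
    iconUrl: "",
    iconX2Url: ""
)

preferences {
    section("Buttons") {
        // Devices implementing HoldableButton are expected to implement PushableButton too
        input "buttons", "capability.holdableButton", title: "Button devices", multiple: true, required: true
    }
}

def installed() { initialize() }

def updated() {
    unsubscribe()
    initialize()
}

def initialize() {
    subscribe(buttons, "pushed.1", buttonHandler)   // button one only
    subscribe(buttons, "held.1", buttonHandler)     // button one only
}

def buttonHandler(evt) {
    // evt.name is the attribute ("pushed" or "held"); evt.value is the button number as a String
    switch (evt.name) {
        case "pushed":
            log.info "${evt.device.displayName}: button ${evt.value} pushed"
            break
        case "held":
            log.info "${evt.device.displayName}: button ${evt.value} held"
            break
    }
}
```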

DoubleTapableButton:

/*

DoubleTapableButton (used in addition to PushableButton if the device supports a double-tap function)

driver def:

capability "DoubleTapableButton"

commands:

doubleTap(<button number that was double tapped>)

attributes:

NUMBER doubleTapped //sendEvent(name:"doubleTapped", value:<button number that was double tapped>)

app:

device list input type:

capability.doubleTapableButton

subscription:

subscribe(deviceList, "doubleTapped", buttonHandler) //all buttons

subscribe(deviceList, "doubleTapped.1", buttonHandler) //button one only

notes:

this capability is not intended to be used separately from PushableButton; all DoubleTapableButton devices are expected to also include and implement PushableButton

*/
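A driver for a multi-function button usually decodes one kind of device report and then has to emit the matching attribute. A minimal sketch, assuming a hypothetical `sendButtonEvent(action, button)` helper that the driver's own parsing code would call once it knows which button was used and how; the command methods each capability adds simply reuse it. Driver name and namespace are also hypothetical.

```groovy
metadata {
    definition(name: "Example Multi-Action Button", namespace: "example", author: "example") {
        capability "PushableButton"
        capability "HoldableButton"
        capability "DoubleTapableButton"
    }
}

// Hypothetical helper: action must be one of the attribute names these capabilities define
private void sendButtonEvent(String action, Integer button) {
    if (!(action in ["pushed", "held", "doubleTapped"])) {
        log.warn "Unknown button action: ${action}"
        return
    }
    // isStateChange so repeated actions on the same button each produce an event
    sendEvent(name: action, value: button, isStateChange: true,
              descriptionText: "${device.displayName} button ${button}: ${action}")
}

// Commands defined by the three capabilities
def push(button)      { sendButtonEvent("pushed", button as Integer) }
def hold(button)      { sendButtonEvent("held", button as Integer) }
def doubleTap(button) { sendButtonEvent("doubleTapped", button as Integer) }
```

Funnelling everything through one helper keeps the event shape identical across actions, so an app can treat `pushed`, `held`, and `doubleTapped` uniformly.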

ReleasableButton:

/*

ReleasableButton (used in addition to PushableButton if the device supports a release function)

driver def:

capability "ReleasableButton"

commands:

release(<button number that was released>)

attributes:

NUMBER released //sendEvent(name:"released", value:<button number that was released>)

app:

device list input type:

capability.releasableButton

subscription:

subscribe(deviceList, "released", buttonHandler) //all buttons

subscribe(deviceList, "released.1", buttonHandler) //button one only

notes:

this capability is not intended to be used separately from PushableButton; all ReleasableButton devices are expected to also include and implement PushableButton

*/
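One common use for `released` is hold-to-dim: start a level change when a button is held and stop it when the button is released. The sketch below assumes the selected button device also implements HoldableButton and that the dimmers implement Hubitat's ChangeLevel capability (`startLevelChange(direction)` / `stopLevelChange()`). The app name, inputs, and the choice of button 1 for "up" and button 2 for "down" are hypothetical.

```groovy
definition(
    name: "Example Hold To Dim",   // hypothetical app name
    namespace: "example",
    author: "example",
    description: "Hold a button to dim, release to stop",
    category: "Convenience",
    iconUrl: "",
    iconX2Url: ""
)

preferences {
    section("Devices") {
        input "remote", "capability.releasableButton", title: "Button device", required: true
        input "dimmers", "capability.changeLevel", title: "Dimmers", multiple: true, required: true
    }
}

def installed() { initialize() }

def updated() {
    unsubscribe()
    initialize()
}

def initialize() {
    subscribe(remote, "held", heldHandler)        // requires HoldableButton on the remote
    subscribe(remote, "released", releasedHandler)
}

def heldHandler(evt) {
    // Hypothetical mapping: button 1 brightens, button 2 dims; other buttons are ignored
    String direction = (evt.value == "1") ? "up" : (evt.value == "2") ? "down" : null
    if (direction) {
        dimmers.each { it.startLevelChange(direction) }
    }
}

def releasedHandler(evt) {
    // Stop the level change started by the matching hold
    if (evt.value in ["1", "2"]) {
        dimmers.each { it.stopLevelChange() }
    }
}
```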