Looking forward to any tool you can offer in the next build, thanks. Just FYI, I've bumped into several more similar (if not identical) cases even on this community forum. The internal cron is really not easy to manage without system access to the service, which is a conceptual base for Hubitat.

"updateCheck" is a method I have been using in the code I maintain.

Note the uppercase C in the name. Your log is showing "updatecheck", which isn't mine, as far as I know.

I've been retiring "updateCheck" as opportunities arise. Most recently, it was removed from Aeotec Multisensor 6 driver.

Thanks for the idea. Seems like updateCheck was removed at v2.1.0. Well, "updatecheck" method is from something else, indeed.

I've spent a substantial amount of time trying to develop driver code with this method in order to remove the orphaned job. All in vain :-(

So the only solution I see here is for Hubitat to be able to manage this type of faulty job, either on its own or by giving the user a tool for manual cleanup. Will wait for it in the next build...

In that code, updated() does an unsubscribe() and was, a release ago, followed by a schedule(..). I removed the schedule(..) as well as the updateCheck method. So... clicking on Save Preferences should have cleared it out. The mechanism to get into this state is a puzzle to me. I'd think the driver would have to be swapped, probably for a built-in, leaving the schedule(..) behind with no method to call. I don't see how that would then leave the device name empty. If it's an app, then deleting the app could do that, but the platform would do an unsubscribe() as part of the delete, wouldn't it? If it doesn't, this would be a much bigger problem than just you.

The orphaned scheduled job you have is something we haven't seen before, and obviously it would be best if such a thing could never arise (if we knew how it arose we'd fix it).

It's pretty easy for an app to manage Cron for itself. Two sides to it, establishing a schedule and removing a schedule (changing one being accomplished by remove and re-establish). The hub method schedule(cron-string, myScheduledMethod) allows the schedule from any cron string to be established. The hub method unschedule(myScheduledMethod) removes any schedule that references that method. Refined control by these two methods does imply using a separate scheduled method for any schedule that might require individual management, but generally this is not a burden for an app.
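As a rough illustration of the establish/remove pairing described above, here is a toy Python model. It is not the Hubitat API: the `ToyScheduler` class, the `pollDevice` handler name, and the cron strings are all made up for the sketch. It only shows why removing by method name clears every schedule that references that method, and how a job whose handler name matches no existing method ends up orphaned.

```python
# Toy model only: this is NOT the Hubitat API, just an illustration of the
# schedule/unschedule pairing. All names and cron strings are hypothetical.

class ToyScheduler:
    def __init__(self):
        # Each job is a (cron_string, handler_name) pair.
        self.jobs = []

    def schedule(self, cron_string, handler_name):
        # Establish a schedule that calls handler_name.
        self.jobs.append((cron_string, handler_name))

    def unschedule(self, handler_name):
        # Remove every job that references handler_name (exact, case-sensitive match).
        self.jobs = [job for job in self.jobs if job[1] != handler_name]

    def orphaned(self, available_methods):
        # Jobs whose handler name matches no method that still exists.
        return [job for job in self.jobs if job[1] not in available_methods]


scheduler = ToyScheduler()
scheduler.schedule("0 0 3 * * ?", "updateCheck")
scheduler.schedule("0 0/30 * * * ?", "pollDevice")

# Changing a schedule is done by removing it and re-establishing it.
scheduler.unschedule("updateCheck")
scheduler.schedule("0 0 4 * * ?", "updateCheck")

# A job registered under "updatecheck" (lowercase c) refers to a different name.
scheduler.schedule("0 0 5 * * ?", "updatecheck")
orphans = scheduler.orphaned({"updateCheck", "pollDevice"})
```

In this model the orphaned job can still be removed by name; the situation in this thread is harder because the job has no owning app or device from which to make that call.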

Full direct cron management by a user is not supported. But it's not a design objective of the hub to put this sort of feature directly in users' hands. The hub's design relegates schedule creation and management to apps and drivers.

I've had this problem before, with some old build. It resolved itself after another upgrade, which made me happy at the time because the db was growing heavily every day and hurting hub performance. Back then it wasn't that easy to clean the db or to see scheduled jobs via the UI (I had some scripts for that, AFAIR).

Here's how I see the issue now:

- Short term: get rid of the orphaned job. This might have to be a special advanced hub command/tool that cleans up cron schedules regardless of the job's namespace.

- Long term: find the culprit. If the issue arises after an upgrade of my system in particular, I'm going to watch the next one carefully.

Corrupted cron is not a new problem in this community. A rebuild/cleanup tool would certainly help anyone impacted.

Also, I picked up the idea of an orphaned scheduled job ("no name") right here, at community.hubitat.com. I just can't find the right thread at the moment.

Would you know when the next build is scheduled to be released publicly? If I can get help with a db/cron cleaning tool before the next build release, it'd be awesome. I'm really tired of non-operating scheduled jobs on my hub.

We don't have a date at this time. It will go to beta at some point, prior to public release. That has not happened yet.

Appreciate the new feature in the new build: deleting scheduled jobs (individually) via the UI. Unfortunately, cleaning up all jobs didn't return my cron to a functional state; the service is still not running. I'm not even sure how to get visibility into that internal service for further troubleshooting :-(

It doesn't look as though there's currently an endpoint I can use to see if there's an update pending.

http://IP/hub/update

doesn't appear to work anymore on 2.3.9.177

Error 404: The page requested could not be found

That endpoint has only been around for a few weeks, so yes it works. But not as you have pasted it here verbatim.

“tokenValue” needs to be replaced with the actual hub management token value, which is fetched by first entering a different endpoint.

See this post that describes how these new endpoints work.

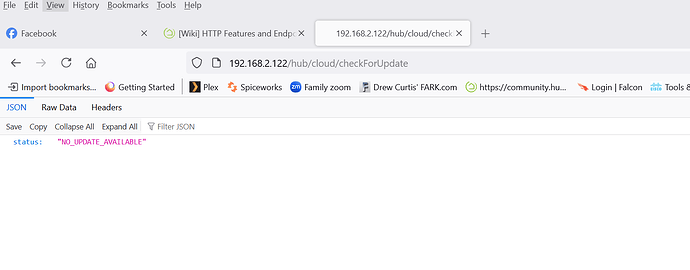

http://<hubIp>/hub/cloud/checkForUpdate still works

I have found that using "/hub/cloud/checkForUpdate" in RM now occasionally generates an error, something like this:

java.net.SocketTimeoutException: Read timed out on line 7112 (method allHandlerX)

I believe that when I use it in an app or driver I normally use a timeout value of around 600 to eliminate that issue - not sure how you'd do that in RM.
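For anyone calling the endpoint from outside the hub, a minimal sketch of the same idea in Python: request `/hub/cloud/checkForUpdate` with a generous read timeout so a slow response does not raise a timeout error. The function names are hypothetical, and treating "600" as seconds is an assumption; the post above does not state the unit.

```python
import urllib.request


def check_for_update_url(hub_ip):
    # Endpoint path as quoted in this thread; hub_ip is your hub's address.
    return f"http://{hub_ip}/hub/cloud/checkForUpdate"


def check_for_update(hub_ip, timeout_seconds=600):
    # Assumption: the "600" mentioned above is in seconds.
    # urlopen raises an error if the hub does not answer within the timeout.
    url = check_for_update_url(hub_ip)
    with urllib.request.urlopen(url, timeout=timeout_seconds) as response:
        return response.read().decode("utf-8", errors="replace")
```

Calling `check_for_update("192.168.1.10")` (a placeholder address) would return the raw response body; its format is not described in this thread, so it is returned unparsed.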

I can check for an available update:

http://<hubIp>/hub/cloud/checkForUpdate

but is there a way to hands-off trigger said update?

M.

Yes, see the endpoints added in 2.3.9.176:

Oh that's great. I guess I had missed that. Thanks!

M.

Does anyone know if there is an endpoint for creating a new local backup?

Edit: Answered my own question - http://hubitat.local/hub/backupDB?fileName=latest will not only download the latest backup, it will also create a backup first and then download it.
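A minimal Python sketch of scripting that download, assuming the endpoint behaves as described in the edit above (create a backup, then return it). The function names, the destination path, and the timeout value are hypothetical choices for illustration.

```python
import shutil
import urllib.parse
import urllib.request


def backup_url(hub_host="hubitat.local", file_name="latest"):
    # Builds the URL quoted above: /hub/backupDB?fileName=latest
    query = urllib.parse.urlencode({"fileName": file_name})
    return f"http://{hub_host}/hub/backupDB?{query}"


def download_backup(destination_path, hub_host="hubitat.local", timeout_seconds=600):
    # Streams the response to disk instead of holding it all in memory.
    # The timeout is a guess; creating a backup may take a while.
    with urllib.request.urlopen(backup_url(hub_host), timeout=timeout_seconds) as response:
        with open(destination_path, "wb") as out_file:
            shutil.copyfileobj(response, out_file)
    return destination_path
```

Running `download_backup("hub-backup.bin")` from a scheduled task on another machine would be one way to keep off-hub copies; the file name here is only a placeholder.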

Getting "Not found" for many of these....