Not me.

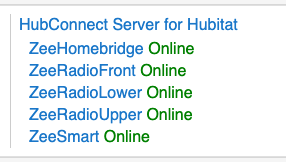

I only have 4 Hubitat hubs in my Production system:

(Homebridge and ST won't go through the NodeJS server in this iteration, if ever.)

I'm not sure "speed" is a measurable criterion...

The point of the NodeJS server is to reduce the number of packets the hub has to discard... the server does the discarding instead of the hub. That frees up CPU for anything else the hub needs or wants to do. Which might be nothing. For smallish (small relative to using multiple hubs) systems, the 'cost' of discarding may not cause any other action to be delayed.

Let's imagine you have 20 devices on one hub, you mirror 15 of them to the server, and it's got 20 additional devices mirrored from hub #3. In that scenario, hub #1 is going to be receiving 20 devices' worth of event socket packets it must discard. 20 is nothing, easy for the hub. At some point, however (60? 120?), the simple act of receiving the packet will delay something else. If that's a Z-device packet, then you might perceive a delay. It's all relative to the CPU available at the moment. The hub has a LOT of CPU, but for any given millisecond, all cores might be busy. By offloading those packets, CPU isn't being consumed in a 'do nothing' exercise.
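
To make that scenario concrete, here's a rough Python sketch of the idea. It is not the actual server code (that's NodeJS), and every name in it (`Event`, `forward_to_hub`, the `hub3-dev…` IDs) is made up for illustration. It also assumes hub #1 wants none of hub #3's 20 devices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    device_id: str  # hypothetical ID for a mirrored device
    value: str


def forward_to_hub(events, wanted_device_ids):
    """Proxy-side filter: pass along only events the hub subscribed to."""
    return [e for e in events if e.device_id in wanted_device_ids]


# 20 devices mirrored from hub #3, one event each.
hub3_events = [Event(f"hub3-dev{i}", "on") for i in range(20)]

# Assumption: hub #1 is not interested in any of hub #3's devices.
hub1_wants = set()

# Without the proxy, every one of these events reaches hub #1,
# which has to receive it and then throw it away.
discarded_on_hub = sum(1 for e in hub3_events if e.device_id not in hub1_wants)

# With the proxy, the discarding happens before anything is sent to the hub.
delivered = forward_to_hub(hub3_events, hub1_wants)

print(f"hub discards without proxy: {discarded_on_hub}")
print(f"events delivered with proxy: {len(delivered)}")
```

The work of discarding doesn't go away, it just moves to a box whose CPU isn't also busy handling Z-device traffic.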

Ticket submitted...
