If it isn't updating via webhook, it is almost always either a POST URL configuration issue or a firewall issue on the machine running Node-RED.
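For the firewall side, one quick test is whether the Node-RED port accepts TCP connections from another device on your network. Below is a minimal Python sketch. It assumes you run it on a second computer on the same LAN as the hub, since the hub itself can't run it. The function name is hypothetical, and the host and port are placeholders to replace with your own Node-RED IP and port.

```python
import socket


def port_is_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        # create_connection resolves the host and attempts a TCP handshake
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused, timed out, or unreachable: a firewall, a wrong IP,
        # or Node-RED not listening on that port
        return False


if __name__ == "__main__":
    # Placeholder values: replace with your own Node-RED host IP and port
    host, port = "192.168.2.5", 1880
    if port_is_reachable(host, port):
        print(f"{host}:{port} accepts TCP connections")
    else:
        print(f"{host}:{port} is NOT reachable (check firewall, IP and port)")
```

A successful connection only shows the port is open to the computer you tested from. A firewall rule that allows that machine but blocks the hub's IP would still pass this check.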

99% of the time it is the POST URL. Users enter the wrong IP, forget to include the port, or get some other detail wrong. Clicking "Configure Webhook" only checks that the setting was successfully written to Hubitat, not that the URL is actually correct.

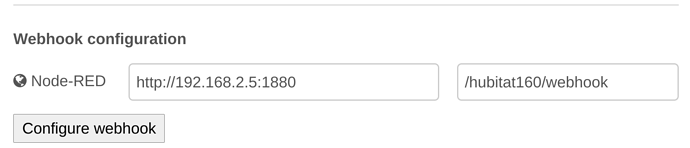

An example from one of my setups:

192.168.2.5 is my Node-RED IP (not the Hubitat hub's IP).

1880 is my Node-RED port (the default port).
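Since the config button doesn't verify the URL, here is a rough sanity check for the most common mistakes: the hub's IP instead of Node-RED's, or a missing or wrong port. It's a Python sketch, not part of the Hubitat nodes. The function name is hypothetical, and the path in the example URLs (`/webhook-path`) is a placeholder, not the real webhook path, so paste in the full URL from your own config node.

```python
from urllib.parse import urlsplit


def check_post_url(url: str, node_red_ip: str, node_red_port: int = 1880) -> list[str]:
    """Return a list of problems found in a webhook POST URL (empty means it looks OK)."""
    problems = []
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https"):
        problems.append(f"scheme should be http or https, got {parts.scheme!r}")
    # The host must be the Node-RED machine, not the Hubitat hub
    if parts.hostname != node_red_ip:
        problems.append(f"host is {parts.hostname!r}, expected the Node-RED IP {node_red_ip!r}")
    try:
        port = parts.port  # raises ValueError for a non-numeric or out-of-range port
    except ValueError:
        problems.append("port is not a valid number")
    else:
        if port is None:
            problems.append("no port given (Node-RED defaults to 1880)")
        elif port != node_red_port:
            problems.append(f"port is {port}, expected {node_red_port}")
    return problems


if __name__ == "__main__":
    # Placeholder path; use the full URL from your own config node
    print(check_post_url("http://192.168.2.5:1880/webhook-path", "192.168.2.5"))  # prints []
    print(check_post_url("http://192.168.2.5/webhook-path", "192.168.2.5"))
    # prints ['no port given (Node-RED defaults to 1880)']
```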

Also make sure you actually type in the IP and port! The config node shows the values it thinks belong there in grey, but those are only hints: you still have to type them in and then update the config node.