I'm looking for a motion sensor that will only trigger on human motion. At first I was hoping that EufyCam 2 would be a solution, but its motion zones and AI are not used when it reports motion to HomeKit. (I bridge the HomeKit sensor to Hubitat via Homebridge (HOOBS) and the MakerAPI plugin.) I don't need a camera solution, as I already have that and get access to whatever the EufyCam detects within the Eufy app, but I do need to be able to trigger my Hubitat chimes and lights without the false positives I am currently dealing with due to the lack of motion zones and human-detection AI.

I don't think there is an AI motion sensor out there that will work with HE. Most human detectors need a camera to distinguish between humans and other objects. You can try Blue Iris.

Camect can turn a camera (Wyze, Amcrest, etc.) into an AI-detecting device, and you can link that to Hubitat ([Release] Camect Connect).

I just managed to set something similar up using tinyCam and a new driver still being developed.

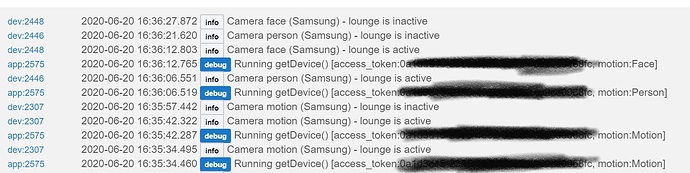

Basically, put your cams into tinyCam running on a tablet, old phone or PC that is in the home and on 24x7. It supports a huge range of cams. Set the motion triggers for your camera(s) to be on for motion, object (person, vehicle, pet) and face (non-personal). Then use the Webhook option in each camera's settings panel to send the notification to your hub (you get the specific URL required from the app; details are in another post, below). If you want to use apps other than tinyCam on the tablet/phone/PC and keep the motion detection running, be sure to activate the 'background mode' for whichever cameras you like. Finally, activate the tinyCam webserver, which will allow you to view your cams from a dashboard using an HTML tile (you can find that driver with a search in this forum too). This uses your tablet/phone/PC as a local server and will provide another URL to use for an HE dashboard HTML tile or any other use you may have for viewing the camera.

Then in Hubitat, set up virtual motion sensors, one for each motion type wanted from each camera (e.g., motion, person, pet, vehicle, face). Then load the app from the post below, select which virtual motion sensors you want this camera to activate, and name the app as needed (to create an instance specific to each camera). Remember to activate OAuth when you install the app. This will generate the OAuth token for you, which gets displayed in the app along with the URL. These are what you then use in the webhook in tinyCam to send its motion notification to the appropriate app instance you just set up in HE.
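For anyone who wants to see roughly what ends up in the tinyCam webhook fields, here is a minimal Python sketch. It assumes the usual local shape of a Hubitat OAuth app endpoint (`/apps/api/<app id>/<path>?access_token=<token>`). The per-type path segment (`/person`, `/pet`, ...), the hub IP, the app id and the token below are all made-up placeholders, not what this app actually uses, so always copy the real URL that the app displays.

```python
from urllib.parse import urlencode

# Motion types mentioned in the post; one virtual sensor per type per camera.
MOTION_TYPES = ("motion", "person", "pet", "vehicle", "face")


def webhook_urls(hub_ip, app_id, token, motion_types=MOTION_TYPES):
    """Return {motion_type: url} for one camera's app instance.

    The trailing path segment per motion type is a hypothetical layout;
    the real app shows the exact URL to paste into tinyCam.
    """
    base = f"http://{hub_ip}/apps/api/{app_id}"
    query = urlencode({"access_token": token})
    return {kind: f"{base}/{kind}?{query}" for kind in motion_types}


# Placeholder values for illustration only.
for kind, url in webhook_urls("192.168.1.50", 123, "example-token").items():
    print(kind, url)
```

Since each camera gets its own app instance, each camera would have its own app id and token, which is why the function takes them per call.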

Yeah, it sounds complicated but it really isn't. I need to make a post that explains it with screenshots, step by step.

Here's where you can find the app. Give me a few minutes to post the latest version with the sensor selection....

Blue Iris has an additional piece that does AI detection for humans only. This route makes more sense if you have security cameras, though, and not so much for just smart motion sensors.

Can Blue Iris AI do human detection at night? EufyCam 2 can't.

Using this, yes.

Again, it's a lot to set up just for that reason... unless you are wanting more cameras. Then it's a great buy!

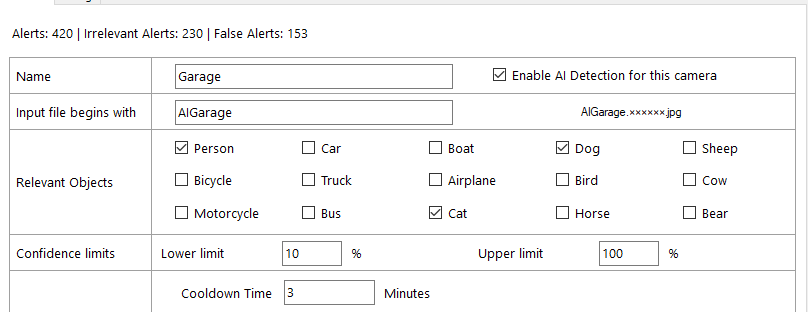

There is also a free AI integration for Blue Iris using DeepStack AI and AI.Tools, which works great as well. Sentry AI is much easier to set up, though the free option doesn't take long to set up either. I use my Blue Iris cameras with DeepStack AI to set virtual motion devices on my Hubitat via MQTT, and it works great, eliminating probably 95% of false alerts. What makes it hard to get to 100% true alerting is this: if there is motion in the monitored zone and someone happens to be outside that zone but still in the picture that gets sent to the AI for processing, you will get a false alert from either AI system, because there was a person in the picture and the AI isn't told what the zone is.
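One way to work around the "AI isn't told what the zone is" problem, if you have a script sitting between the AI and the hub, is to check where the detected person is before raising the alert. The Python sketch below is only an illustration under some assumptions: it takes DeepStack-style predictions (dicts with `label`, `confidence`, `x_min`, `y_min`, `x_max`, `y_max`), treats the zone as a simple rectangle in the same pixel coordinates (real zones may not be rectangular), and the thresholds are arbitrary. It is not how AI.Tools or Sentry handle it.

```python
def box_overlap_fraction(box, zone):
    """Fraction of the box's area that lies inside the zone.

    Both arguments are (x_min, y_min, x_max, y_max) tuples in pixels.
    """
    bx0, by0, bx1, by1 = box
    zx0, zy0, zx1, zy1 = zone
    width = min(bx1, zx1) - max(bx0, zx0)
    height = min(by1, zy1) - max(by0, zy0)
    area = (bx1 - bx0) * (by1 - by0)
    if width <= 0 or height <= 0 or area <= 0:
        return 0.0
    return (width * height) / area


def people_in_zone(predictions, zone, min_confidence=0.5, min_overlap=0.5):
    """Keep only confident 'person' predictions that mostly sit in the zone."""
    hits = []
    for p in predictions:
        if p["label"] != "person" or p["confidence"] < min_confidence:
            continue
        box = (p["x_min"], p["y_min"], p["x_max"], p["y_max"])
        if box_overlap_fraction(box, zone) >= min_overlap:
            hits.append(p)
    return hits


# Made-up example: one person inside the zone, one outside it.
zone = (0, 0, 100, 100)
predictions = [
    {"label": "person", "confidence": 0.9,
     "x_min": 10, "y_min": 10, "x_max": 50, "y_max": 50},
    {"label": "person", "confidence": 0.9,
     "x_min": 200, "y_min": 200, "x_max": 260, "y_max": 300},
]
print(len(people_in_zone(predictions, zone)))
```

Only raising the virtual motion device when `people_in_zone` returns something would drop the case where the person is in the frame but outside the monitored area.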

Ouch, that's weak. In the tinyCam deployment the in-app object processor conforms to the zone setting for that camera. There's also a sensitivity slider for each of the options (motion, person/pet/vehicle, face) which provides some degree of control over false positives.

AI.Tools lets you choose between a lot of different object types as well. I haven't tested them all as I have never seen a cow, horse, bear, ... on my cameras.

Are any Blue Iris cameras wireless without using WiFi? For example, my EufyCams use their own radio technology from camera to base (then Ethernet to my LAN). My WiFi isn't always up.

Blue Iris can use almost any camera that conforms to RTSP or ONVIF, so maybe (there are hundreds of manufacturers out there that make compatible cameras). I only use POE cameras with Blue Iris for reliability, even though my WiFi has been very reliable since I installed 3 Ubiquiti APs a few years back. I've been lucky in finding a way to get Cat5e or Cat6 cable everywhere I've wanted a camera so far, although some have been a challenge. Actually, while my shed cameras are POE, the switch they are connected to gets its signal from WiFi via an AirGateway Pro, but I don't use any devices that have their own proprietary radio signal.

Is there a topic on this on ipcamtalk.com?

Yes, just search for "GentlePumpkin deepstack tool tutorial".

One thing I have not yet looked into regarding EufyCam 2 is that it supports RTSP (Real Time Streaming Protocol) to NAS (Network Attached Storage). If I use a NAS instead of Eufy HomeBase 2, could I connect that NAS to Hubitat? Synology is the NAS I see often mentioned in connection with EufyCam 2. What might the implications be with respect to motion detection, AI, AI at night, and motion zones? EufyCam has AI and motion zones but not AI at night. Also, I don't know how many Eufy features would be passed through to the NAS.

Has anyone seen the new Blue Iris update with local DeepStack support?

I may fiddle with setting that up this weekend, as I could not get DeepStack to work previously.

Would be sweet if I can set up Blue Iris to report to Hubitat when a positive ID is triggered!

It works great. Just make sure that if you currently have DeepStack installed, you uninstall it, then download the CPU or GPU version from "Using DeepStack with Windows 10 (CPU and GPU) | DeepStack v1.2.1 documentation" and install it in the default path. If you install the GPU version, note the dependencies. After that it's as simple as enabling it in the AI tab of the global settings, then setting up each camera's AI settings on the trigger tab per profile. There is no need for duplicate cameras as in the old DeepStack implementation instructions.
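On getting a positive ID reported to Hubitat: besides MQTT, one option is to have the alert action request a Maker API URL that flips a virtual motion sensor. The sketch below just builds those URLs in Python. It assumes Maker API's local command format (`/apps/api/<app id>/devices/<device id>/<command>?access_token=<token>`) and a virtual motion sensor that exposes `active` and `inactive` commands. The IP, ids and token are placeholders, and how you paste the URLs into Blue Iris is left to its alert settings.

```python
from urllib.parse import quote, urlencode


def maker_api_command_url(hub_ip, app_id, device_id, command, token):
    """Build a local Maker API URL that sends one command to one device."""
    query = urlencode({"access_token": token})
    return (
        f"http://{hub_ip}/apps/api/{app_id}"
        f"/devices/{device_id}/{quote(command)}?{query}"
    )


def alert_urls(hub_ip, app_id, device_id, token):
    """URL pair for a virtual motion sensor: one on alert, one on reset.

    Assumes the device accepts 'active' and 'inactive' commands.
    """
    return {
        "on_alert": maker_api_command_url(hub_ip, app_id, device_id, "active", token),
        "on_reset": maker_api_command_url(hub_ip, app_id, device_id, "inactive", token),
    }


# Placeholder values for illustration only.
for when, url in alert_urls("192.168.1.50", 45, 210, "example-token").items():
    print(when, url)
```

The reset URL matters: if nothing ever sends `inactive`, the virtual sensor stays active and rules that wait for a fresh active event won't fire again.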

Can't be 100% on this, but I'm fairly sure I don't get any dodgy false alerts with these. I have one set up in every room and a few in hallways etc.

Cheap as chips. Work ace.

Never rated any of my Zigbee/Z-Wave battery-powered ones. They were awful.

12V standard PIRs all the way. Connected via NodeMCUs/Konnected.