I know it's been a while, but I was finally able to carve out some time to embark on a deep dive research project, and the results were quite surprising.

In my current setup I have 20 Kasa devices, mostly plugs (a mix of HS103, KP125 and EP10) and 3 ES20M motion dimmers. 2 of the KP125s are used for energy use monitoring.

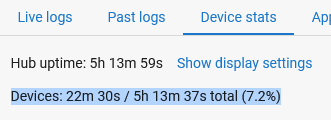

Hub (C-7) load is very reasonable:

Local apps: 1h 31m 47s / 4d 12h 53m 4s total (1.4%)

Devices: 2h 2m 1s / 4d 12h 53m 17s total (1.9%)

As a first step, I tweaked your driver code to log callback latency of getSysinfo(), getRealtime() and setRelayState(), and keep track of the number of "slow" responses (>1s) vs "normal" responses (typically 120-140ms). I also increased the callback timeout from 9s to 30s, just to see if it does anything.

All the devices are configured with a 10-minute refresh interval, except for KP125s, that are on a 1-minute cycle.

- Surprise #1: every single device was showing those latency issues, with anywhere between 15% and 30% of responses taking longer than 1s.

- Surprise #2: While most slow responses were about 4-6s, occasionally latencies would jump to absurd levels:

dev:1028 2023-12-19 16:10:30.511 warn Curio Cabinet (left)-2.3.6: High Sysinfo latency: 22949ms

- Surprise #3: KP125 devices that were getting 20x the requests, showed a much lower percentage of slow responses (1.2%-1.8%), but in absolute numbers, they were comparable to the rest of the devices. Logs also confirm that the absolute majority of those slow responses were observed during those once-every-10-minutes refresh cycles. Also in many cases the "backlog" would clear in batches:

dev:865 2023-12-19 07:01:00.154 warn Guest Bathroom-2.3.6: High Sysinfo latency: 2133ms

dev:1027 2023-12-19 07:01:00.082 warn Curio Cabinet (right)-2.3.6: High Sysinfo latency: 2027ms

dev:835 2023-12-19 07:01:00.076 warn Reading Light A-2.3.6: High Sysinfo latency: 4053ms

dev:837 2023-12-19 07:00:51.285 info Living Room Receiver-2.3.6: Emeter latency: 123ms

dev:837 2023-12-19 07:00:51.158 info Living Room Receiver-2.3.6: Sysinfo latency: 137ms

Which leads me to believe that the root of the issue is the way Hubitat is handling callbacks. Without knowing the actual implementation details it's pure conjecture, but it feels like once the response packet arrives, and HE decides that it can't process it right this moment, for whatever reason, it kicks the proverbial can further down the road, i.e. reschedules the processing to 1 second later or something like that.

To completely rule out the devices themselves, I set up HomeAssistant with nothing besides a Kasa integration, which polls devices very aggressively (every 5 seconds), and didn't get a single hiccup over the 24 hours period I had it running, confirming that it's not the devices.

As it was running, I was fully expecting the symptoms to get worse on the Hubitat side, but, interestingly enough, it didn't make any difference in the overall rate of slow responses.

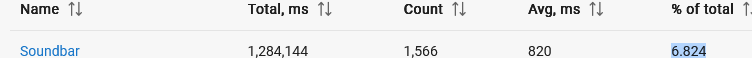

Nothing in the apps/drivers stats stands out as particularly slow or a resource hog, so I'm still curious, what could possibly cause these "stop the world"-like events. It's hard to pinpoint whether it started after one of the hub firmware updates a few months back, or if it's simply the number of devices managed by a single hub has reached a critical mass? I have quite a few, about 50 Z-Wave and 60 Zigbee devices in addition to 20 Kasa devices.

In the end, it's not very difficult to work around this issue using a virtual device, but it would be a lot cooler if I didn't have to